Introduction: The Hidden Costs of AI Infrastructure

As enterprises accelerate AI adoption, many overlook one critical factor: the data center itself. Power-hungry GPUs, legacy network gear, inefficient layouts, and outdated cooling systems silently eat into ROI. But what if a smarter design could flip the script?

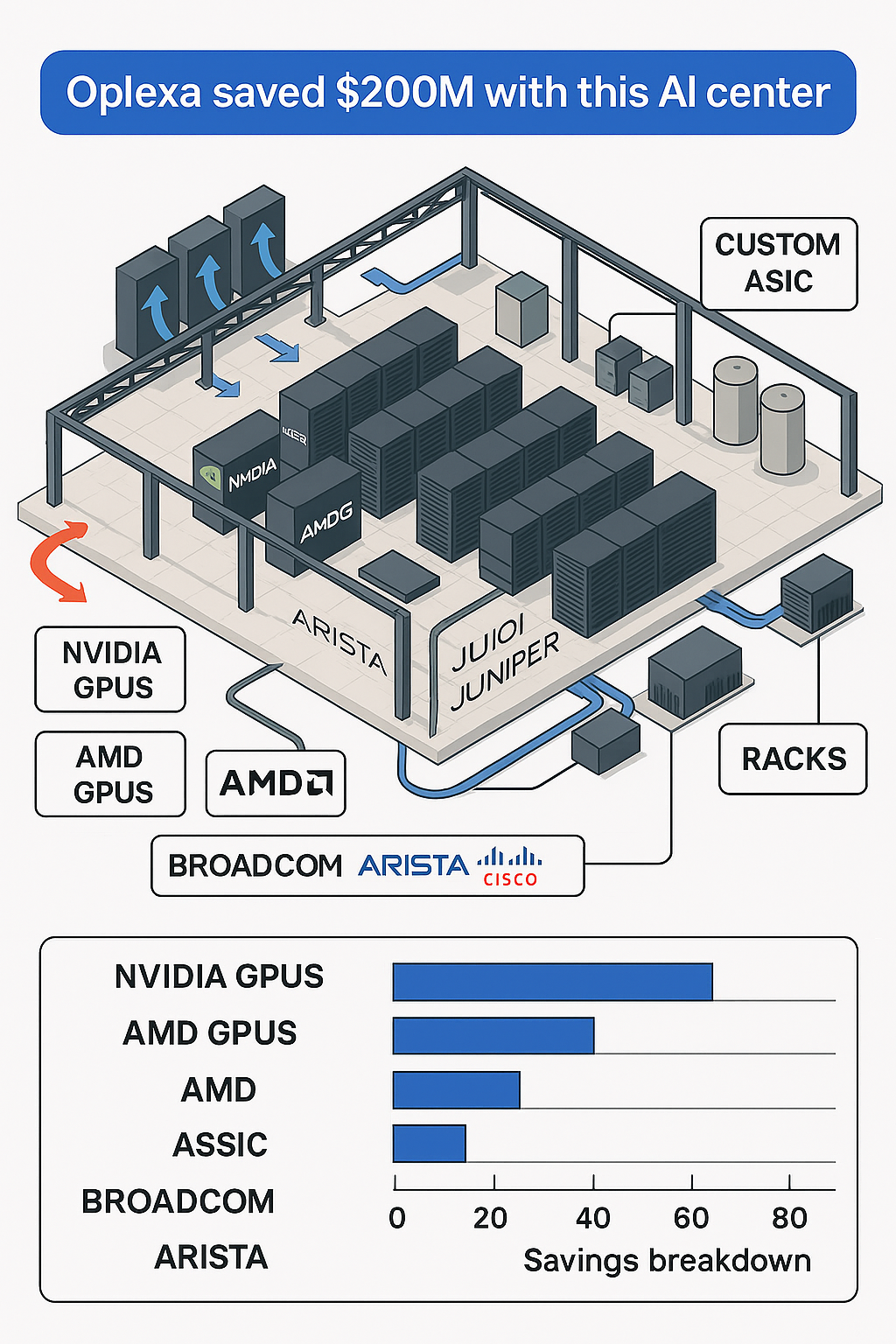

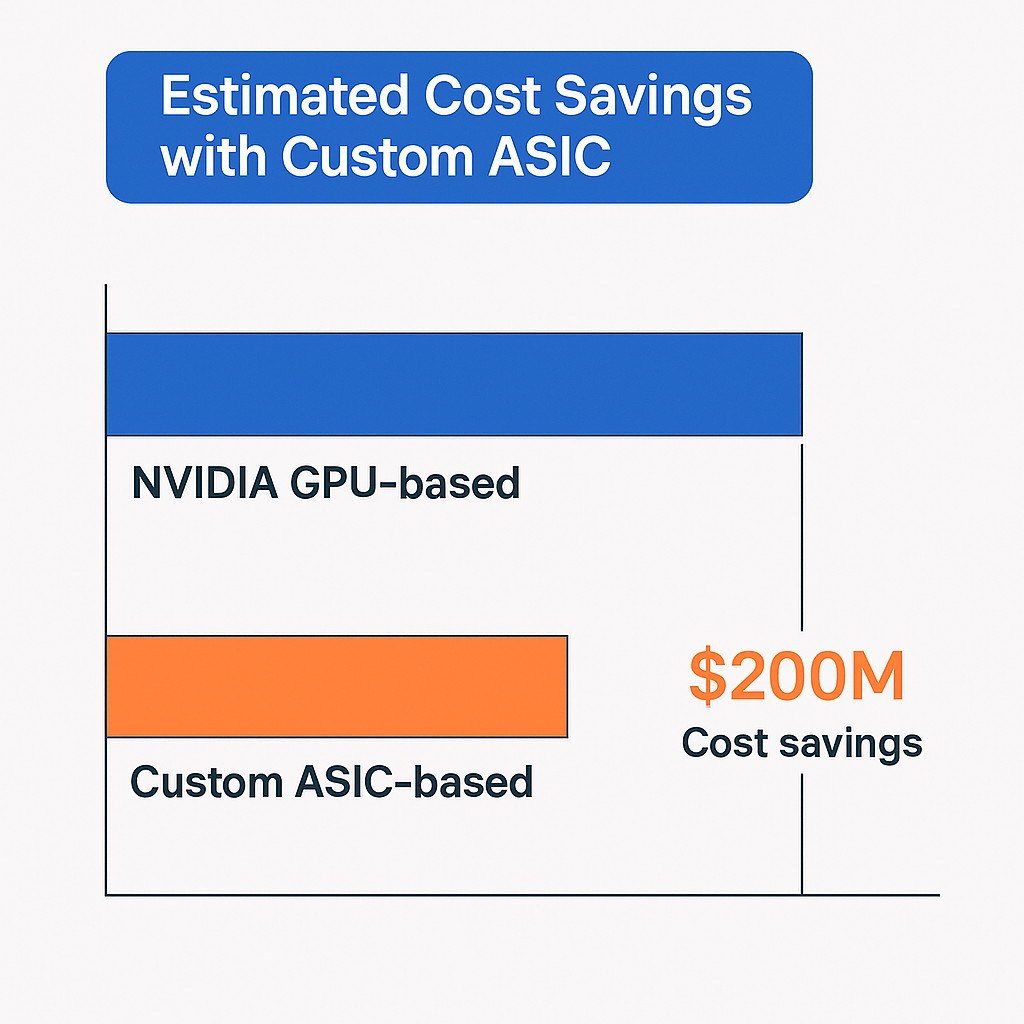

At Oplexa, we reimagined our AI data center, and the result was staggering: $200 million in cumulative capex and opex savings over five years, while boosting performance and scalability.

The Challenge: AI at Scale Isn’t Plug and Play

AI workloads are uniquely demanding:

- Compute-intensive models like LLMs require dense GPU clusters

- High-speed, low-latency networking is essential

- Cooling systems must handle 2x–3x the heat load of traditional deployments

- Vendor lock-in and proprietary hardware inflate costs

Without a purpose-built architecture, enterprises face runaway costs and system bottlenecks.

The Oplexa Blueprint: An AI-Optimized Data Center

To solve this, our engineers and analysts designed a data center architecture guided by four principles:

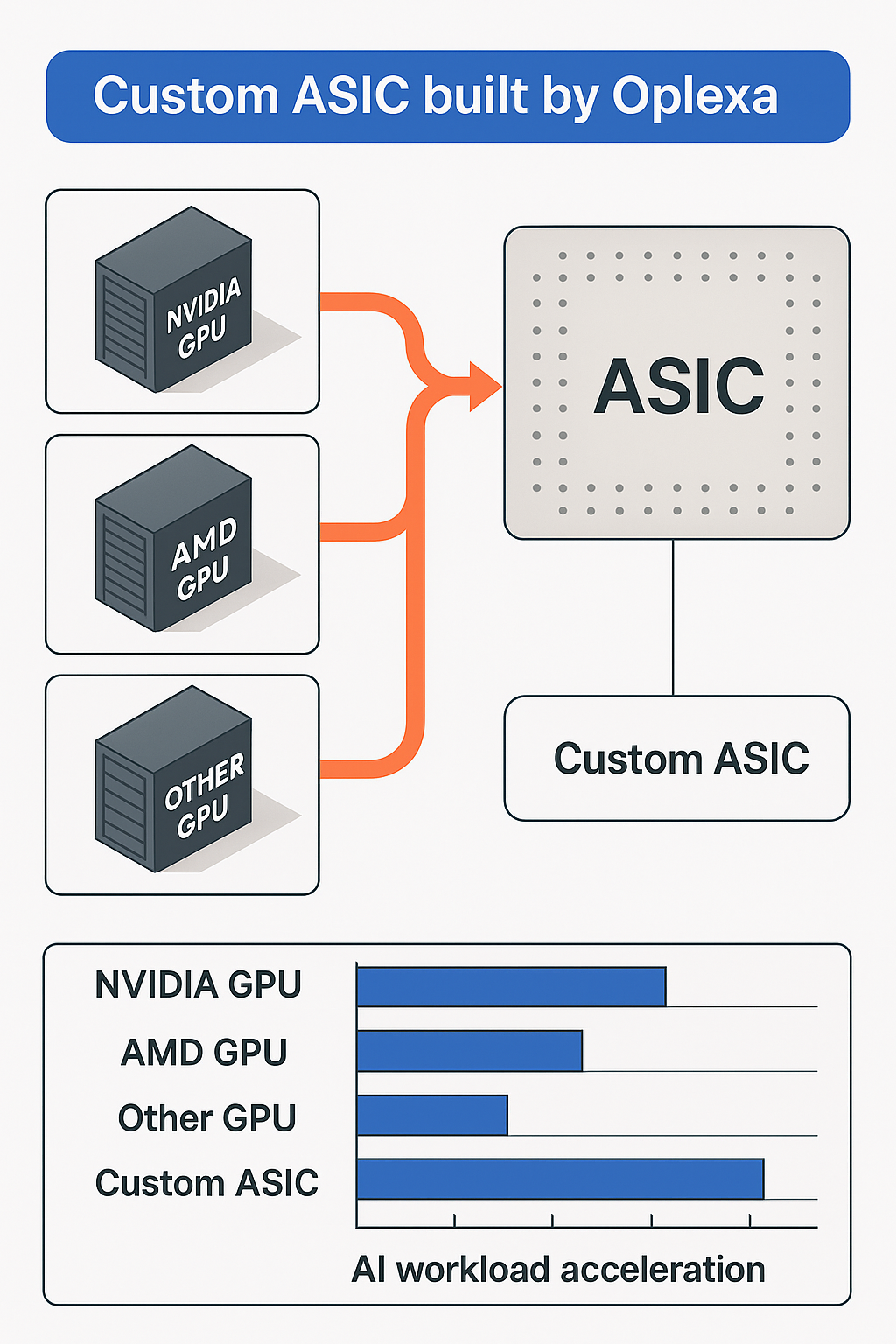

1. Diversified Compute Stack:

- NVIDIA GPUs for high-performance training

- AMD GPUs for competitive inference workloads

- Custom ASICs where cost-per-watt optimization is critical

2. Open Network Fabric:

- Core switches from Arista, Juniper, and Cisco

- Optimized spine-leaf architecture for high throughput

- Software-defined overlays for dynamic AI workload routing

3. Rack-Level Efficiency:

- Modular design to allow hot/cold aisle containment

- Reduced cabling complexity and better airflow

- Dynamic workload orchestration at rack level

4. Vendor-Agnostic Integration:

- Broadcom and custom NICs

- Storage tiering with open formats

- Zero vendor lock-in
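To make the spine-leaf point in principle 2 more concrete, here is a minimal sketch of how the oversubscription ratio of a single leaf switch is commonly estimated: server-facing bandwidth divided by spine-facing bandwidth. The function name, port counts, and link speeds are illustrative assumptions, not Oplexa's actual configuration.

```python
def oversubscription_ratio(downlink_ports, downlink_gbps, uplink_ports, uplink_gbps):
    """Ratio of server-facing to spine-facing bandwidth on one leaf switch."""
    downlink = downlink_ports * downlink_gbps
    uplink = uplink_ports * uplink_gbps
    if uplink <= 0:
        raise ValueError("a leaf needs at least one uplink")
    return downlink / uplink


# Hypothetical leaf: 32 x 100G ports to servers, 8 x 400G uplinks to spines.
ratio = oversubscription_ratio(32, 100, 8, 400)
print(f"{ratio:.1f}:1")
```

A ratio of 1.0 means the leaf is non-blocking. Higher ratios mean the uplinks can become a bottleneck when many servers transmit at once, which is the kind of latency risk the networking principle is meant to avoid.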

Quantified Impact: How We Saved $200M

| Optimization Area | Savings Contribution | Key Factors |
|---|---|---|
| GPU Selection Strategy | $60M | Mix of NVIDIA, AMD, and ASICs |
| Network Architecture | $40M | Open ecosystem and SDN efficiency |
| Cooling Optimization | $50M | Heat reuse, airflow modeling, containment |
| Rack Design & Layout | $30M | Improved density, power distribution |
| Vendor Consolidation | $20M | Reduced software licensing & support overhead |

Total: $200M+ in cumulative capex and opex savings over 5 years.
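For readers who want to check the arithmetic, the short sketch below tallies the five line items from the table and works out each area's share of the total. The per-year figure is a simple average over the stated 5-year window and is an assumption for illustration only; the article does not say how the savings are distributed across years.

```python
# Savings by optimization area in millions of USD, taken from the table above.
savings_musd = {
    "GPU Selection Strategy": 60,
    "Network Architecture": 40,
    "Cooling Optimization": 50,
    "Rack Design & Layout": 30,
    "Vendor Consolidation": 20,
}
YEARS = 5  # savings window stated in the article

total = sum(savings_musd.values())
shares = {area: 100 * value / total for area, value in savings_musd.items()}
per_year = total / YEARS  # simple average; an assumption, not a reported figure

# Print the areas from largest to smallest contribution.
for area, value in sorted(savings_musd.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{area:<24} ${value}M  {shares[area]:.0f}%")
print(f"Total: ${total}M over {YEARS} years (about ${per_year:.0f}M per year on average)")
```

The listed items sum to $200M, with GPU selection and cooling together accounting for more than half of the total.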

Lessons for the Enterprise CIO or CAIO

This isn’t just about cutting costs. It’s about building a future-ready AI foundation:

- Plan for multi-GPU and multi-vendor environments

- Network matters: Don’t underestimate latency at scale

- Think beyond servers—racks, airflow, and energy impact AI success

- Avoid vendor lock-in with open standards and modularity

How Oplexa Can Help

Whether you’re:

- Evaluating AI infrastructure investments

- Choosing between cloud and on-prem

- Comparing GPU/ASIC vendors

- Designing a green data center

Our market research reports and AI consulting team can guide your strategy with real-world data and engineering insight.

Conclusion: Smart Infrastructure, Smarter ROI

AI isn’t just about algorithms—it’s about the environment they run in. The Oplexa AI data center proves that with the right architecture, massive savings and high performance can go hand in hand.

Request reports or schedule a strategy session at www.oplexa.com