In the last decade, data centers have evolved from passive compute warehouses into intelligent, distributed nerve centers of the digital economy. Today, with the explosive growth of AI, especially large-scale models and real-time inference, that transformation has accelerated sharply. AI is no longer just a workload; it is a design principle that drives every layer of infrastructure, from silicon to software.

As the stewards of this transformation, we stand at a pivotal moment: building an AI-native infrastructure that is not only scalable and efficient, but also sustainable and secure.

Why AI is Reshaping the Core of Data Center Infrastructure

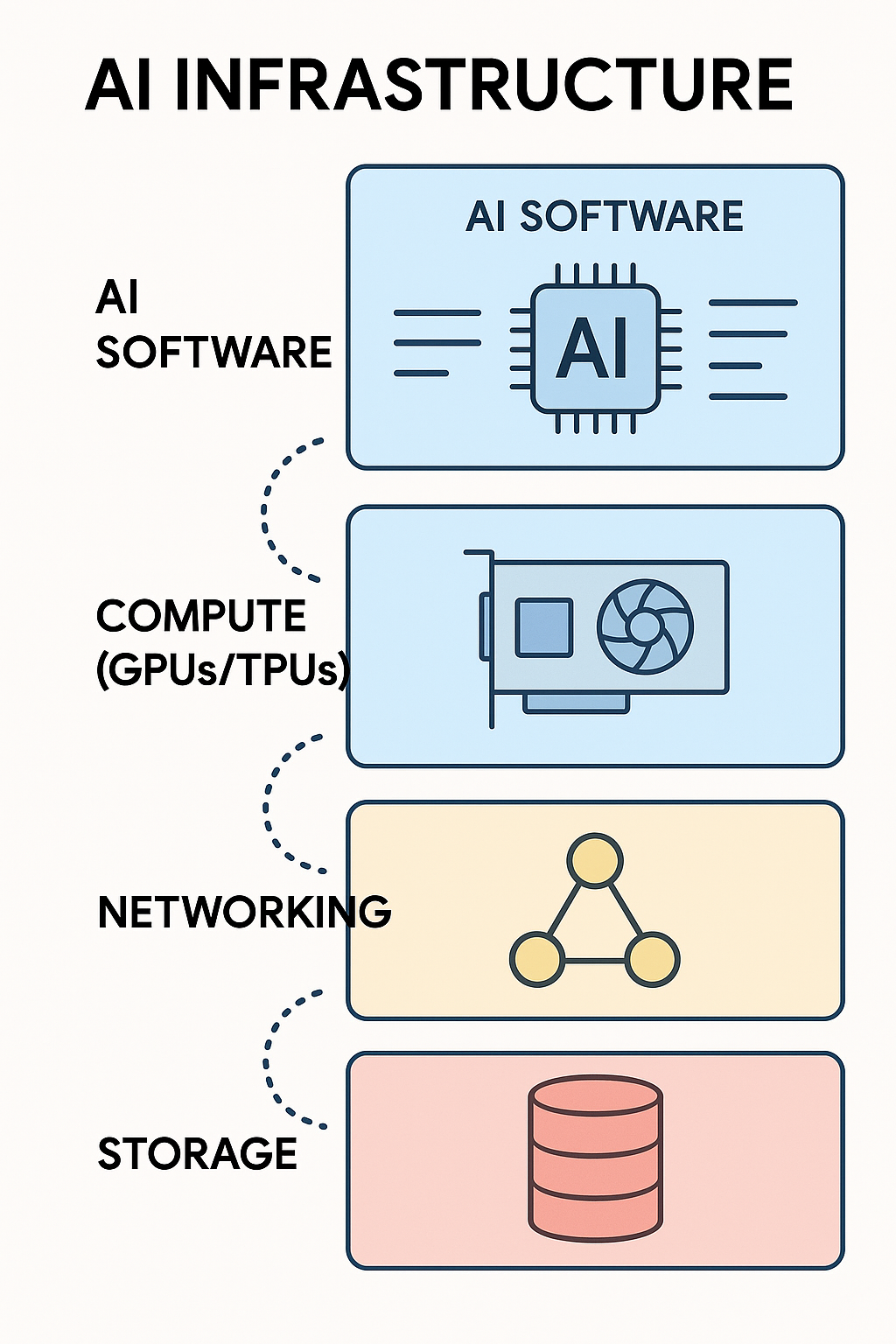

At the heart of every generative AI tool, LLM, and real-time AI application lies a complex symphony of infrastructure:

- High-density compute (GPUs/TPUs)
- Ultra-fast networking fabrics
- Massive parallel storage systems
- AI-aware software orchestration layers

Traditional data center models, optimized for CPU-centric, transactional workloads, are being outpaced by the demands of AI. Model training can require thousands of accelerators working in tandem, while inference at the edge requires low latency and high throughput. Together, these demands mean we must rethink architecture, power distribution, thermal design, and even code deployment models.

From Infrastructure to Intelligence: Software’s Role in Scaling AI

Building the hardware is just the beginning. The real unlock comes from intelligent software orchestration that makes AI infrastructure elastic, efficient, and developer-friendly. Our software engineering teams are focused on:

- AI-optimized schedulers that allocate GPU/TPU workloads in real-time
- Self-healing infrastructure that predicts hardware failures using AI itself
- End-to-end observability stacks that monitor carbon efficiency, thermal load, and performance
- Federated testing frameworks that simulate model behavior across thousands of edge nodes

We are building systems that can not only handle today’s AI demands but can anticipate tomorrow’s breakthroughs.
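To make the scheduler item in the list above more concrete, here is a minimal sketch of accelerator-aware placement. It assumes a simple best-fit heuristic (largest jobs first, each placed on the node that would be left with the fewest idle accelerators). The `Node`, `Job`, and `schedule` names, the node sizes, and the heuristic itself are illustrative assumptions, not a description of any production scheduler.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """Hypothetical node with a pool of free accelerators (GPUs/TPUs)."""
    name: str
    free_accelerators: int
    jobs: list = field(default_factory=list)


@dataclass
class Job:
    """Hypothetical job that needs a fixed number of accelerators."""
    name: str
    accelerators: int


def schedule(jobs, nodes):
    """Best-fit placement: largest jobs first, tightest-fitting node wins."""
    placement = {}
    for job in sorted(jobs, key=lambda j: j.accelerators, reverse=True):
        # Only nodes with enough free accelerators can host the job.
        candidates = [n for n in nodes if n.free_accelerators >= job.accelerators]
        if not candidates:
            placement[job.name] = None  # no capacity: the job stays queued
            continue
        # Pick the node that would have the fewest idle accelerators left.
        best = min(candidates, key=lambda n: n.free_accelerators - job.accelerators)
        best.free_accelerators -= job.accelerators
        best.jobs.append(job.name)
        placement[job.name] = best.name
    return placement


# Illustrative inputs only.
nodes = [Node("node-a", 8), Node("node-b", 4)]
jobs = [Job("train-llm", 8), Job("finetune", 4), Job("batch-infer", 2)]
result = schedule(jobs, nodes)
print(result)
```

With these inputs the two larger jobs fill both nodes and the smallest job is left queued, which is the kind of trade-off a real scheduler would weigh against priorities, preemption, and network topology.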

Global Scale, Local Efficiency

At Google, our platform infrastructure supports services used by billions. That means our AI-ready data centers must operate seamlessly across continents—with regional compliance, local latency targets, and global energy efficiency goals.

Through innovations like liquid cooling, custom silicon (TPUs), and automated power-aware job placement, we’re creating infrastructure that is both high-performance and energy-aware. We’re aligning with the future of green AI: scaling responsibly while optimizing for performance per watt.
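One way to picture power-aware job placement is as a selection problem: among the sites that have enough power headroom for a job, prefer the one with the best performance per watt. The sketch below assumes exactly that rule. The `Site` class, the `place_job` function, and all numbers are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Site:
    """Hypothetical data center site."""
    name: str
    power_headroom_kw: float  # unused power budget, in kilowatts
    perf_per_watt: float      # relative efficiency score (assumed metric)


def place_job(job_power_kw, sites):
    """Place a job on the most efficient site that can still power it."""
    eligible = [s for s in sites if s.power_headroom_kw >= job_power_kw]
    if not eligible:
        return None  # no site has enough headroom
    best = max(eligible, key=lambda s: s.perf_per_watt)
    best.power_headroom_kw -= job_power_kw  # reserve the power budget
    return best.name


# Illustrative values only.
sites = [
    Site("site-1", power_headroom_kw=500, perf_per_watt=1.0),
    Site("site-2", power_headroom_kw=120, perf_per_watt=1.4),
    Site("site-3", power_headroom_kw=50, perf_per_watt=1.8),
]

first = place_job(100, sites)    # site-3 lacks headroom, so site-2 wins
second = place_job(100, sites)   # site-2 is now nearly full, so site-1 wins
third = place_job(1000, sites)   # exceeds every site's headroom
print(first, second, third)
```

A real placement system would also account for the regional compliance and latency targets mentioned earlier; this sketch isolates the power and efficiency dimension only.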

The Engineer’s Engineer Era

Our mission is not just to build systems that run AI workloads, but to empower engineers and researchers to build faster, deploy smarter, and scale securely. That’s why we are focused on developer-centric tooling, open hardware/software stacks, and a culture of cross-functional collaboration.

We are hiring not just coders, but system thinkers. Not just testers, but quality architects. Because building for AI at this scale is no longer a linear discipline—it’s a fusion of software engineering, hardware design, systems reliability, and user empathy.

What’s Next?

Looking ahead, the line between software and hardware will continue to blur. AI models will increasingly require co-design with infrastructure, where training efficiency, model architecture, and deployment strategy evolve hand-in-hand.

We believe the future lies in:

- Composable AI infrastructure, deployable like microservices
- Real-time adaptive inference networks for autonomous systems
- Hyper-personalized edge AI, powered by federated data centers

Our challenge—and opportunity—is to shape this future while ensuring that every advancement is sustainable, secure, and universally beneficial.

At the edge of innovation, it’s not enough to follow the curve. We must bend it.

If you’re passionate about shaping the AI-first world, we’re building the platform that makes it possible.

Final Thoughts

Data centers were once the invisible backend of the internet. Today, they are the active brain of AI innovation. As we enter this new frontier, software engineering will be the differentiator—not just in how infrastructure is built, but in how intelligence is scaled.

Let’s build the future—intelligently.

Schedule a strategy session at www.oplexa.com