The world’s largest technology companies have collectively committed more than $660 billion to build the AI data centers that will power the next decade of artificial intelligence. Yet across Virginia, Texas, and Northern Europe, the same companies are finding themselves blocked — not by regulators, not by chip shortages, but by a simple, irreducible constraint: there is not enough electricity to run what they want to build.

The Scale of the AI Data Center Power Crisis

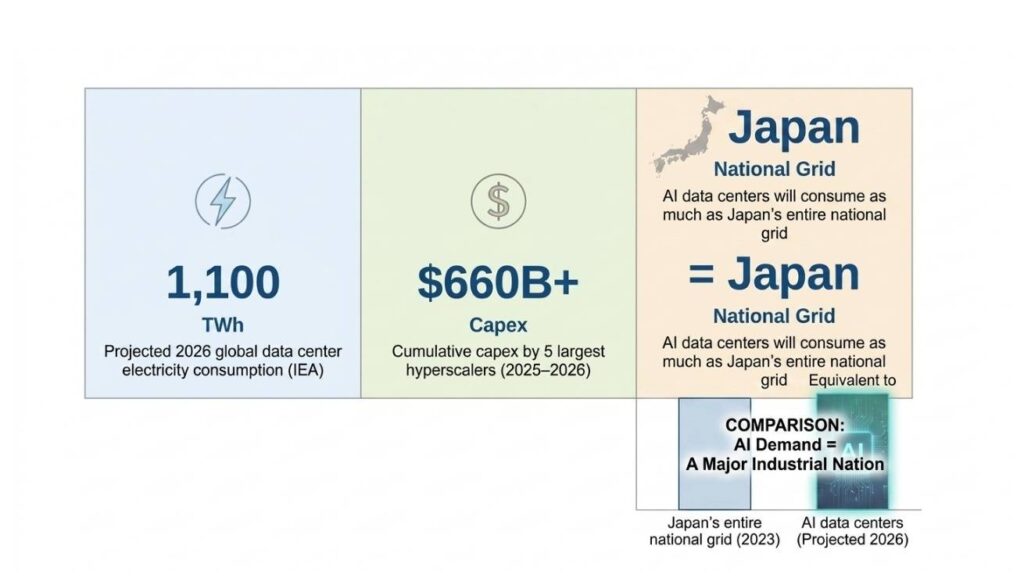

The AI data center power crisis is no longer a future concern; it is the defining infrastructure constraint of 2026. According to the International Energy Agency (IEA), global data center electricity consumption is projected to reach approximately 1,100 TWh in 2026, a figure comparable to Japan’s entire national electricity consumption. This is not a distant risk; it is a present-day operational challenge for every major AI infrastructure operator.
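To put the annual figure in grid terms, an energy total in TWh can be converted to an average continuous load in GW by dividing by the hours in a year. The sketch below does this for the 1,100 TWh figure and derives the compound annual growth rate implied by the roughly 460 TWh consumed in 2022. The function names are illustrative, and the result is an average that says nothing about peak demand or regional concentration.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def average_load_gw(annual_twh: float) -> float:
    """Average continuous load (GW) implied by an annual energy total (TWh)."""
    annual_gwh = annual_twh * 1000  # 1 TWh = 1,000 GWh
    return annual_gwh / HOURS_PER_YEAR


def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1


load_2026 = average_load_gw(1100)
growth = implied_cagr(460, 1100, 2026 - 2022)

print(f"Average load implied by 1,100 TWh: {load_2026:.0f} GW")
print(f"Implied annual growth, 2022 to 2026: {growth:.1%}")
```

On these inputs the average load works out to roughly 126 GW, with consumption growing at about 24% a year over the four-year span.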

What makes this crisis structurally different from previous technology infrastructure bottlenecks is the speed of escalation. AI model training and inference workloads — particularly large language models and multimodal AI systems — are dramatically more power-intensive than conventional cloud computing. A single hyperscale AI training cluster can consume as much power as 50,000 average homes. When you multiply that demand across thousands of clusters, the cumulative electricity requirement becomes a national-scale problem.
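The household comparison can be turned into a rough megawatt figure. The sketch below assumes an average home uses about 10,500 kWh per year, which is in the range of a typical US household but is an assumption rather than a figure from this article; the function name is likewise illustrative.

```python
HOURS_PER_YEAR = 8760

# Assumption: annual consumption of an "average home" (roughly a US household).
ASSUMED_KWH_PER_HOME_PER_YEAR = 10_500


def homes_to_average_mw(
    homes: int, kwh_per_home: float = ASSUMED_KWH_PER_HOME_PER_YEAR
) -> float:
    """Average continuous load (MW) equivalent to a number of homes."""
    annual_kwh = homes * kwh_per_home
    average_kw = annual_kwh / HOURS_PER_YEAR
    return average_kw / 1000  # kW -> MW


cluster_mw = homes_to_average_mw(50_000)
print(f"50,000 homes is roughly {cluster_mw:.0f} MW of continuous load")
```

Under that assumption, a 50,000-home equivalent is on the order of 60 MW of continuous demand; a different household baseline would scale the result proportionally.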

| ⚡ Key Insight: The AI data center power crisis is not a capital problem. The five largest hyperscalers have already committed over $660 billion in capex. It is a grid capacity problem — and capital cannot build new transmission lines or generate more electricity on the timeline AI deployment demands. |

$660 Billion in Capex Meets the Power Wall

The five largest hyperscalers, Microsoft, Google (Alphabet), Amazon (AWS), Meta, and Oracle, have collectively announced AI infrastructure capital expenditure exceeding $660 billion for 2025–2026. This unprecedented level of investment is driving the construction of new AI data center clusters across the United States, Europe, and Asia. Yet a growing number of these projects are running into a constraint that capital alone cannot easily overcome: there is insufficient electricity available at the grid level.

The economics of AI data center construction have historically followed a straightforward pattern — secure land, secure permits, deploy capital, and go live. The AI data center power crisis inverts that model. Today, hyperscalers must first secure power purchase agreements (PPAs) and confirm grid interconnection timelines before committing to site selection. In many markets, interconnection queues for large industrial loads extend five to seven years — timelines that are fundamentally incompatible with the pace of AI deployment.

Virginia: The Epicenter of the AI Data Center Power Crisis

Nowhere is the AI data center power crisis more visible than in Northern Virginia, which hosts the largest concentration of data center capacity on Earth. The region, sometimes called “Data Center Alley,” is often cited as carrying roughly 70% of global internet traffic and serves as the backbone of major cloud providers’ US East operations.

As of late 2025 and into 2026, new data center permits in Virginia have been effectively halted. Dominion Energy and other regional utilities have reached the operational limit of what the existing grid infrastructure can support. The situation has forced a trade-off that would have seemed unthinkable two years ago: allocating scarce grid capacity between new AI compute load and residential demand such as home heating. Grid operators have begun requiring AI data center operators to submit detailed load impact assessments and, in some cases, to fund grid upgrades directly.

| ⚡ Key Insight: Virginia’s AI data center power crisis is a leading indicator, not an outlier. Dublin, Frankfurt, Amsterdam, and Singapore are experiencing structurally similar grid capacity constraints, with data center moratoriums either in place or under active consideration. |

How Hyperscalers Are Responding to the AI Power Crisis

1 Nuclear Energy Partnerships

Facing decade-long timelines for new transmission infrastructure, several hyperscalers have moved aggressively into nuclear energy agreements. Microsoft’s power purchase agreement with Constellation Energy, which underpins the planned restart of Three Mile Island Unit 1, is the highest-profile example. Google has signed agreements for Small Modular Reactor (SMR) capacity from Kairos Power, while Amazon has announced nuclear-focused clean energy partnerships for dedicated AI data center power supply. Nuclear is one of the few dispatchable zero-carbon energy sources that can deliver the gigawatt-scale, 24/7 baseload power that AI data centers require.

2 Geographic Arbitrage

Some hyperscalers are pursuing geographic diversification to access markets with surplus grid capacity. The Nordic countries, particularly Finland, Sweden, and Norway, offer a combination of renewable energy abundance, cold ambient temperatures (reducing cooling costs), and political stability that makes them increasingly attractive. Iceland, with its hydro- and geothermal-powered grid, is emerging as a strategic alternative for AI data center expansion.

3 On-Site Power Generation

A growing number of AI data center projects are exploring on-site power generation and related measures to reduce grid dependency. These include:

- Large-scale solar-plus-storage installations

- Behind-the-meter natural gas generation with carbon capture commitments

- Dedicated hydrogen fuel cell arrays (experimental)

- Direct funding of transmission upgrades to accelerate interconnection

AI Factory Economics: When Power Is the Product

The AI data center power crisis is fundamentally reshaping how hyperscalers and infrastructure investors think about the economics of AI factories. In traditional data center economics, power was a cost input — typically accounting for 30–40% of total operating expense (opex). In the emerging AI factory model, power is becoming a primary constraint on revenue. A hyperscale AI training cluster that cannot secure guaranteed power supply cannot generate compute-as-a-service revenue, regardless of how much capital has been deployed in GPU hardware.
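The shift from power as a cost to power as a revenue cap can be illustrated with a minimal sketch. Every number below is hypothetical, and the model adds one assumption not discussed in this article: a power usage effectiveness (PUE) ratio to convert secured facility power into usable IT load. The class and field names are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class Cluster:
    installed_it_mw: float         # IT load the deployed GPU hardware could draw
    secured_power_mw: float        # firm supply available at the facility meter
    pue: float                     # assumed ratio of facility power to IT power
    revenue_per_it_mw_year: float  # hypothetical annual revenue per powered IT MW

    def powered_it_mw(self) -> float:
        """IT load that can actually run, capped by secured power."""
        return min(self.installed_it_mw, self.secured_power_mw / self.pue)

    def annual_revenue(self) -> float:
        """Revenue scales with powered capacity, not installed capacity."""
        return self.powered_it_mw() * self.revenue_per_it_mw_year

    def stranded_fraction(self) -> float:
        """Share of installed hardware that cannot be powered."""
        return 1 - self.powered_it_mw() / self.installed_it_mw


# Hypothetical cluster: 100 MW of hardware installed, only 60 MW of power secured.
cluster = Cluster(
    installed_it_mw=100,
    secured_power_mw=60,
    pue=1.2,
    revenue_per_it_mw_year=10_000_000,
)

print(f"Powered IT load: {cluster.powered_it_mw():.0f} MW")
print(f"Stranded hardware: {cluster.stranded_fraction():.0%}")
print(f"Annual revenue: ${cluster.annual_revenue():,.0f}")
```

In this toy case half of the installed hardware sits idle, so adding more GPUs changes nothing until `secured_power_mw` rises, which is the sense in which power becomes the binding constraint.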

Companies that secure long-term power purchase agreements at scale today are building a durable competitive advantage that cannot be replicated simply by deploying more capital. The hyperscaler with the most favorable power position — in terms of price, reliability, and carbon profile — will have a structurally lower cost of AI inference at scale.

| OPLEXA FLAGSHIP REPORT

AI Factory Economics: The $480 Billion Power Equation

Deep-dive financial modeling of AI data center power costs, compute economics, and long-term capex strategy through 2035. Includes hyperscaler benchmarking and scenario analysis.

Price: $2,499 → oplexa.com/reports/ai-factory-economics |

AI Data Center Cluster Construction Costs in 2026

The capital cost of constructing an AI-optimized hyperscale data center cluster has risen sharply over the past 24 months. This increase reflects not only rising land and materials costs, but the substantial premium now associated with securing sites that have confirmed power interconnection. In markets like Northern Virginia, the effective cost premium for a power-confirmed site can add 20–35% to total development cost versus equivalent sites with uncertain power timelines.
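One way to reason about the 20–35% premium is to compare it with the cost of waiting for power at a cheaper site. The sketch below uses a hypothetical base development cost and a hypothetical monthly cost of delay; only the premium range comes from the text above, and the function names are illustrative.

```python
def premium_range(
    base_cost: float, low: float = 0.20, high: float = 0.35
) -> tuple[float, float]:
    """Low and high premium for a power-confirmed site, in currency units."""
    return base_cost * low, base_cost * high


def breakeven_delay_months(premium: float, monthly_cost_of_delay: float) -> float:
    """Months of delay at which waiting costs as much as paying the premium."""
    return premium / monthly_cost_of_delay


# Hypothetical inputs: $1B base development cost, $25M per month of delay
# (for example, idle capital and forgone revenue).
BASE_COST = 1_000_000_000
MONTHLY_COST_OF_DELAY = 25_000_000

low_premium, high_premium = premium_range(BASE_COST)
low_months = breakeven_delay_months(low_premium, MONTHLY_COST_OF_DELAY)
high_months = breakeven_delay_months(high_premium, MONTHLY_COST_OF_DELAY)

print(f"Premium: ${low_premium:,.0f} to ${high_premium:,.0f}")
print(f"Break-even delay: {low_months:.0f} to {high_months:.0f} months")
```

With these illustrative inputs the premium pays for itself if the alternative site would wait more than about 8 to 14 months for power, which is well inside multi-year interconnection queues.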

Oplexa’s analysis of AI data center cluster construction costs shows significant regional variation. Nordic markets, despite higher labor costs, often deliver lower total cost of ownership due to free cooling availability and favorable renewable PPA pricing. Middle Eastern markets offer ultra-low land costs but require substantial investment in cooling infrastructure because of high ambient temperatures.

| OPLEXA RESEARCH REPORT

AI Data Center Cluster Construction Costs 2026

Regional cost benchmarking, power-confirmed site premiums, cooling infrastructure economics, and construction cost forecasting for AI hyperscale clusters across 14 global markets.

Price: $999 → oplexa.com/reports/ai-data-center-construction-costs |

AI Infrastructure Strategy Through 2035: Planning Beyond the Power Wall

For enterprise technology buyers, infrastructure investors, and government planners, the AI data center power crisis of 2026 is not an obstacle to navigate around; it is a structural feature of the landscape that must be incorporated into long-term planning assumptions. Organizations that treat the power constraint as temporary or solvable through conventional procurement will find their AI infrastructure strategies consistently delayed and over budget.

The organizations best positioned for the next decade are those that are already treating power as a strategic asset class — securing long-term agreements, investing in distributed infrastructure, and building relationships with grid operators and regulators that will determine interconnection timelines. AI infrastructure strategy through 2035 is, increasingly, an energy strategy.

| OPLEXA RESEARCH REPORT

AI Infrastructure Strategy 2026–2035

Ten-year strategic roadmap covering AI data center site selection, power procurement strategy, hyperscaler competitive positioning, and investment opportunity mapping across global AI infrastructure markets.

Price: $999 → oplexa.com/reports/ai-infrastructure-strategy-2026-2035 |

Conclusion

The AI data center power crisis of 2026 represents a fundamental inflection point in technology infrastructure. For the first time in the history of hyperscale computing, capital investment alone is insufficient to drive expansion. The constraint is physical: the electricity grid cannot accommodate the power demands of AI at the pace AI deployment requires.

The $660 billion in hyperscaler capex will eventually be deployed. But the pace, geography, and economics of that deployment will be shaped by a single variable above all others: access to reliable, affordable, large-scale electricity. Organizations that internalize this constraint early, whether hyperscalers, enterprise AI buyers, or infrastructure investors, will navigate the next decade with a decisive advantage.

Oplexa Research provides the analytical foundation for that navigation. Our three AI infrastructure reports deliver the cost benchmarking, strategic frameworks, and financial modeling that turn the AI data center power crisis from a headline into a decision advantage.

Frequently Asked Questions

Q: How much electricity will AI data centers consume in 2026?

A: According to the IEA, global data center electricity consumption is projected to reach approximately 1,100 TWh in 2026, comparable to Japan’s entire national electricity consumption. This represents a dramatic acceleration from approximately 460 TWh in 2022.

Q: How much have hyperscalers committed in AI data center capex?

A: The five largest hyperscalers — Microsoft, Google, Amazon, Meta, and Oracle — have collectively committed over $660 billion in capital expenditure for AI infrastructure as of 2025–2026.

Q: Why are AI data center permits being blocked in Virginia?

A: New data center permits in Virginia have been effectively halted because cumulative power demand from planned AI data centers exceeds the capacity that regional utilities can supply from the existing grid. Hyperscalers must now compete with residential and industrial consumers for limited electricity.

Q: What is the AI data center power wall?

A: The AI data center power wall refers to the point at which electricity grid capacity — not capital investment or chip availability — becomes the primary constraint on AI infrastructure expansion. Even with hundreds of billions committed in capex, hyperscalers cannot build more data centers if the local grid cannot supply the required power.

Q: How are hyperscalers solving the AI power crisis?

A: Hyperscalers are pursuing several strategies: investing in Small Modular Reactors (SMRs), restarting decommissioned nuclear plants, signing long-term renewable PPAs, exploring Nordic and offshore locations, directly funding grid upgrades, and lobbying for accelerated grid permitting reform.